Gaussian Quantized Variational Bayesian for Continuous and Discrete Generation

2024/11 - 2025/05

We propose GQ-VAE, a novel vector quantization method with a dual latent space that is regularized toward a Gaussian distribution before quantization. This design mitigates both posterior collapse and codebook collapse, and it supports downstream models that operate on either continuous or discrete representations.
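To make the two ingredients named above concrete, here is a minimal NumPy sketch of a Gaussian-regularized continuous latent followed by nearest-neighbor quantization. It is not the GQ-VAE implementation: the standard VAE KL term, the plain VQ-style codebook lookup, and every name and size in it (`kl_to_standard_normal`, `quantize`, `codebook`, the batch and code dimensions) are assumptions chosen for illustration. How GQ-VAE actually couples its two latent spaces and trains its codebook is not described here.

```python
import numpy as np


def kl_to_standard_normal(mu, logvar):
    """KL(N(mu, diag(exp(logvar))) || N(0, I)), summed over dims, averaged over the batch."""
    kl_per_dim = 0.5 * (np.exp(logvar) + mu ** 2 - 1.0 - logvar)
    return kl_per_dim.sum(axis=1).mean()


def quantize(z, codebook):
    """Nearest-neighbor lookup: return code indices and the matching codebook vectors."""
    # Squared Euclidean distances between latents and codes, shape (batch, num_codes).
    dists = ((z[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=2)
    idx = dists.argmin(axis=1)
    return idx, codebook[idx]


# Hypothetical sizes and random stand-ins for encoder outputs (assumptions).
rng = np.random.default_rng(0)
batch, dim, num_codes = 8, 4, 16
mu = rng.normal(size=(batch, dim))
logvar = rng.normal(scale=0.1, size=(batch, dim))
codebook = rng.normal(size=(num_codes, dim))

# Continuous latent: reparameterized Gaussian sample.
z_cont = mu + np.exp(0.5 * logvar) * rng.normal(size=mu.shape)

# Discrete latent: index of the nearest code, plus its embedding.
indices, z_disc = quantize(z_cont, codebook)

# Regularizer that pulls the continuous latent toward N(0, I).
kl = kl_to_standard_normal(mu, logvar)
```

In this sketch, `z_cont` is what a continuous downstream model (for example, a diffusion model) would consume, while `indices` is what a discrete autoregressive model would consume.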

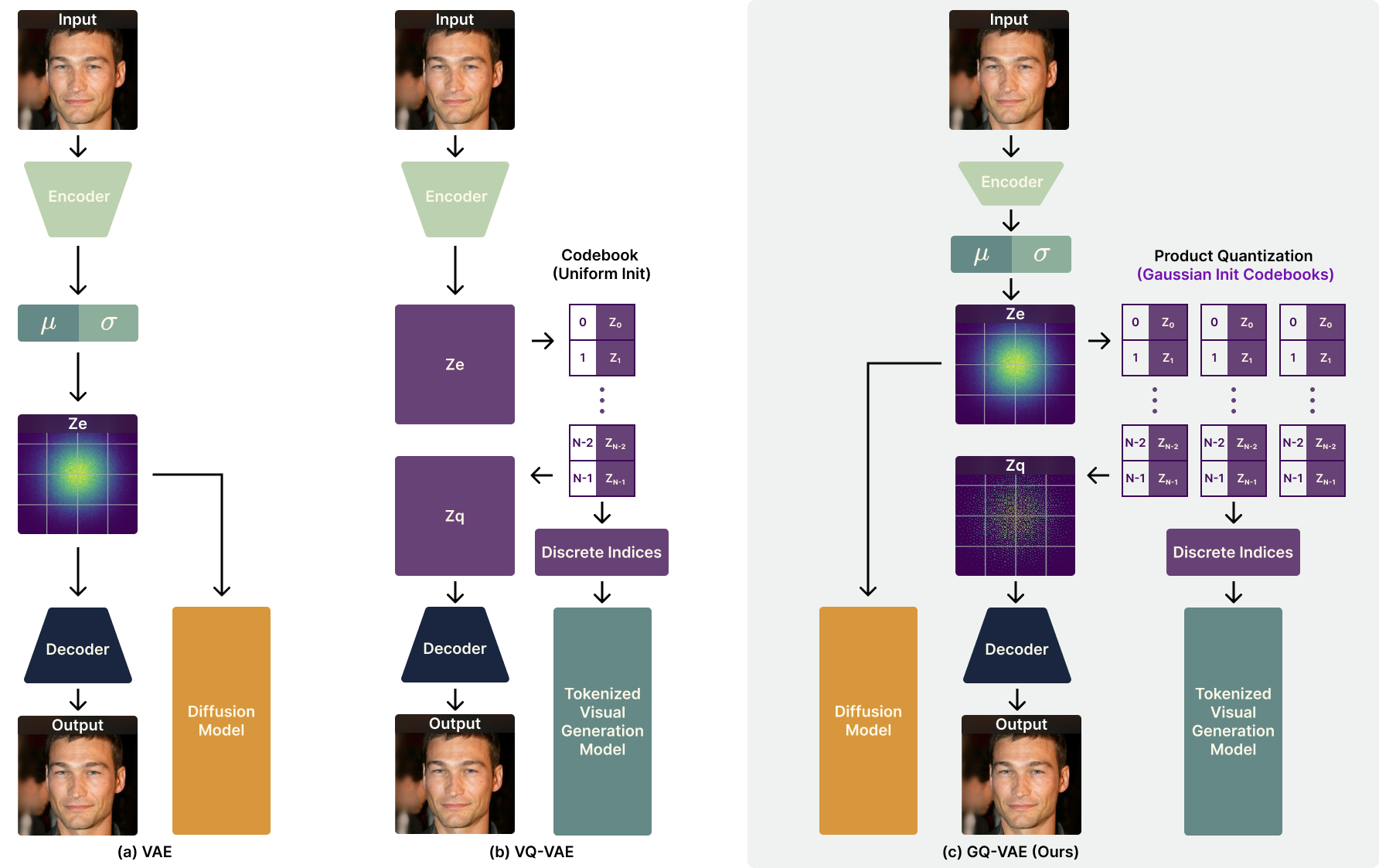

The architecture of our proposed GQ-VAE model.

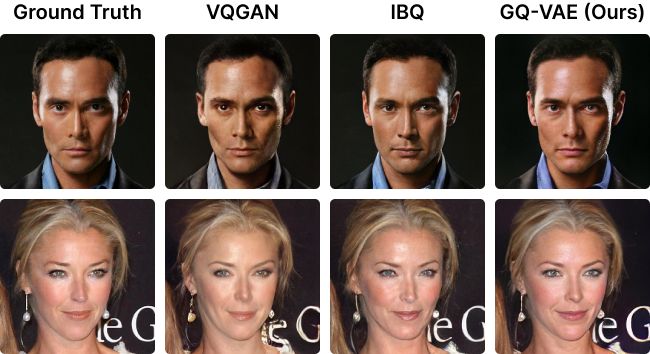

A comparison of image reconstruction results on the CelebA-HQ dataset. From left to right: original images, reconstructions by VQGAN, reconstructions by IBQ, and reconstructions by our GQ-VAE.

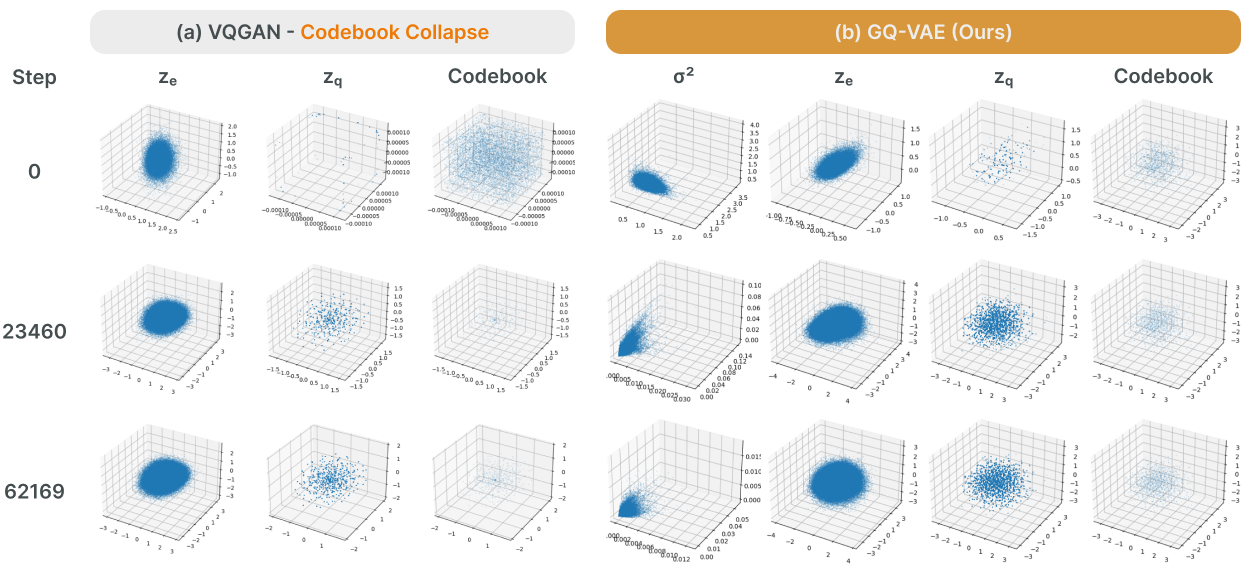

A comparison of latent space visualizations for VQGAN and our GQ-VAE.

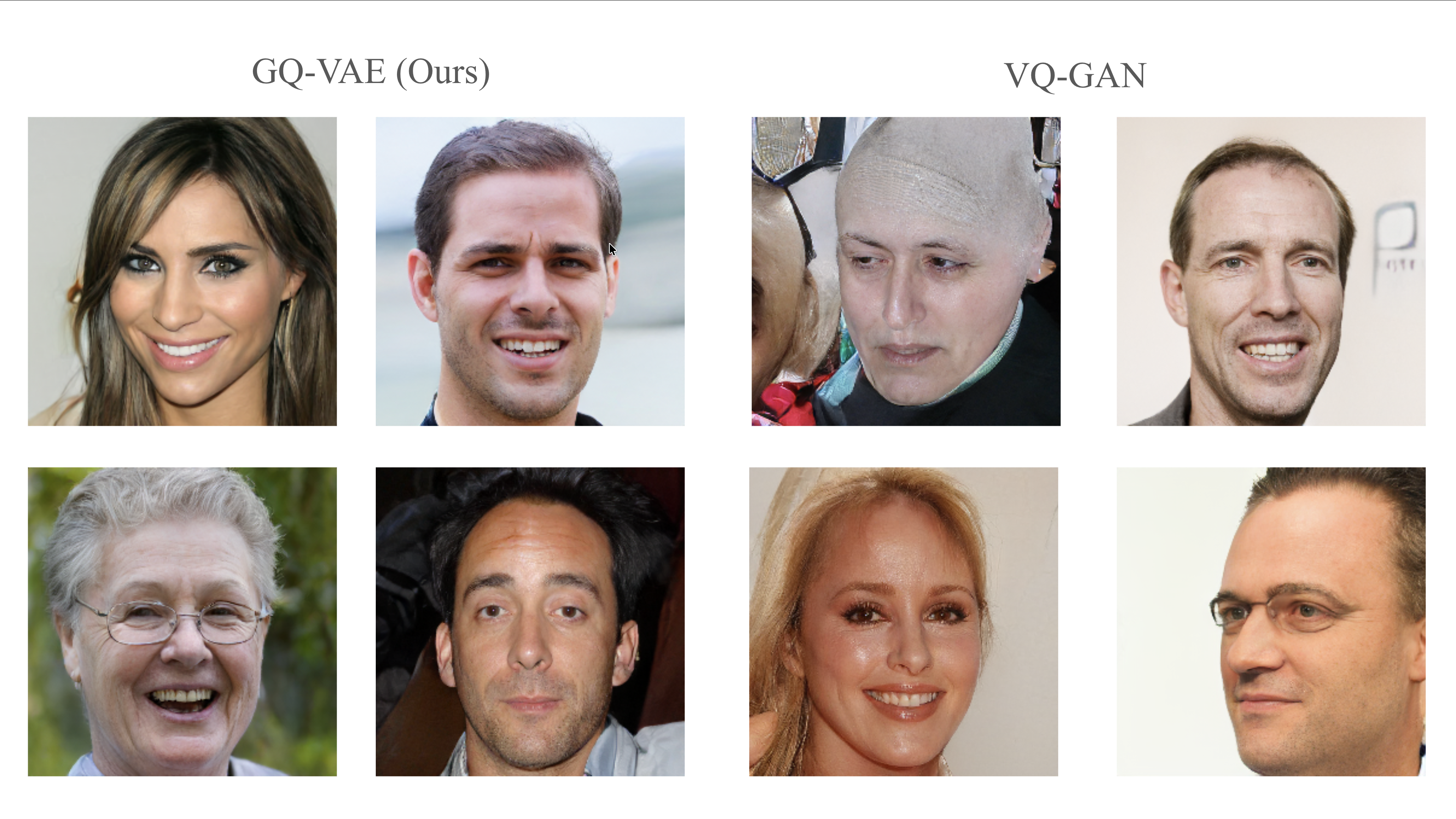

A comparison of autoregressive image generation results on the FFHQ dataset. From left to right: four samples from our GQ-VAE paired with the second-stage Llama model from IBQ, and four samples from VQGAN.

The model architecture, reconstruction comparison, and latent space visualization images are credited to Yuanhe Guo.