projects

Research Projects

Syntax-Guided Cascade Discrete Diffusion for Text Generation

2025/06 - Current

Work in progress...

Gaussian Quantized Variational Bayesian for Continuous and Discrete Generation

2024/11 - 2025/05

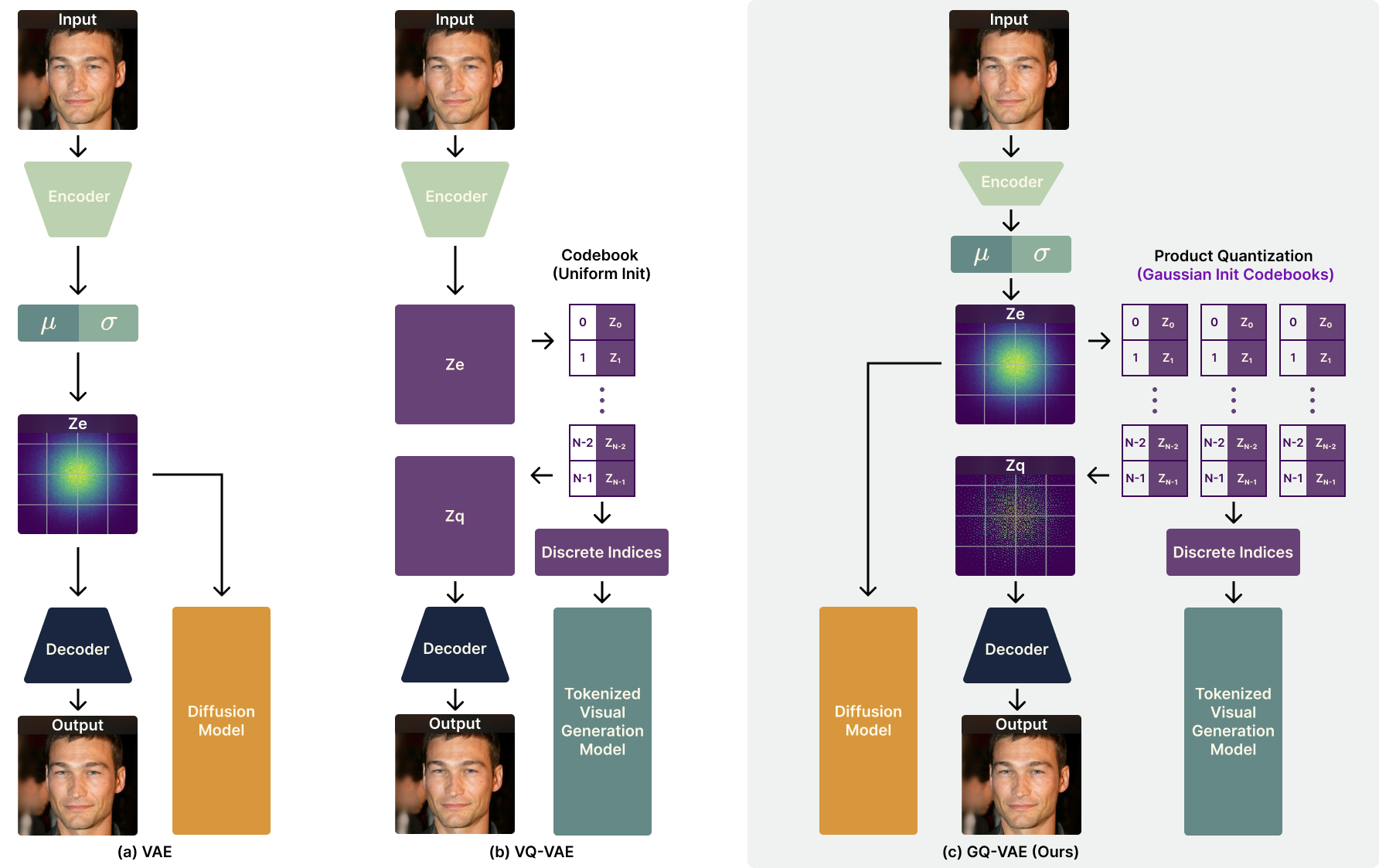

We propose GQ-VAE, a vector quantization method with a dual latent space that is regularized toward a Gaussian distribution before quantization. This design mitigates both posterior collapse and codebook collapse, and it supports downstream models that operate on either continuous or discrete representations.

The architecture of our proposed GQ-VAE model.
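As a rough illustration of the two ingredients named above, the sketch below computes a KL penalty that pulls a diagonal Gaussian latent toward a standard normal, then snaps the latent to its nearest codebook entry. This is a generic, minimal sketch and not the GQ-VAE implementation: the function names, the toy codebook, and the choice of a standard normal target are all assumptions made for illustration, and how GQ-VAE actually couples its two latent spaces is not specified here.

```python
import math


def kl_to_standard_normal(mu, log_var):
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))


def quantize(z, codebook):
    """Return (index, code) for the codebook entry nearest to z (squared Euclidean)."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    index = min(range(len(codebook)), key=lambda i: sq_dist(z, codebook[i]))
    return index, codebook[index]


# Hypothetical toy values, for illustration only.
mu = [0.9, -0.2]          # continuous latent mean
log_var = [0.0, 0.0]      # unit variance in each dimension
codebook = [[-1.0, -1.0], [1.0, 0.0], [0.0, 1.0]]

kl = kl_to_standard_normal(mu, log_var)   # Gaussian regularization term
index, code = quantize(mu, codebook)      # discrete token and its embedding
print(f"KL penalty: {kl:.3f}, token index: {index}, code: {code}")
```

In a setup like this, a continuous downstream model could consume `mu` directly, while a discrete one (for example an autoregressive model) could consume `index`.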

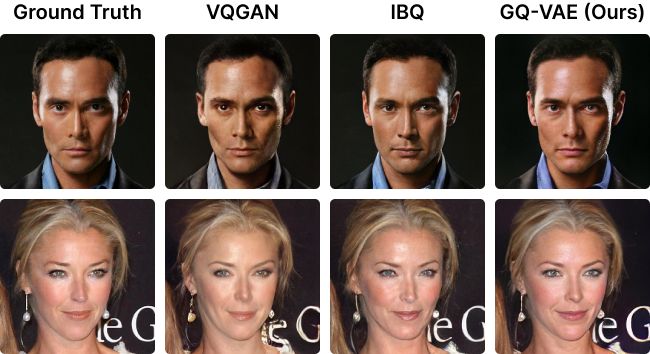

A comparison of image reconstruction results on the CelebA-HQ dataset. From left to right: original images, reconstructions by VQGAN, reconstructions by IBQ, and reconstructions by our GQ-VAE.

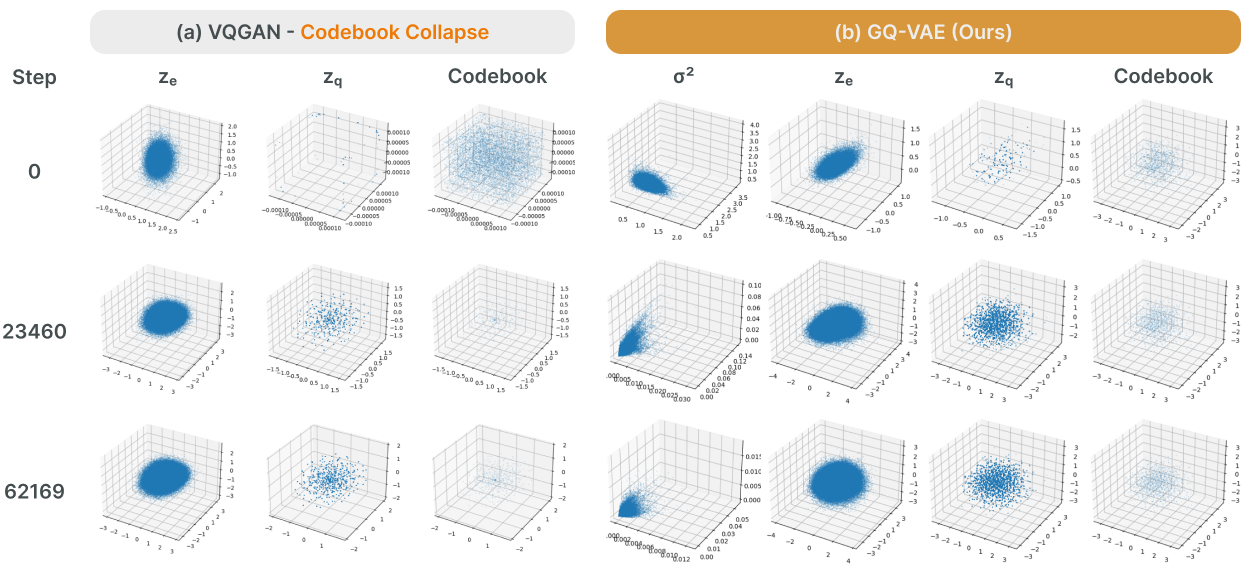

A comparison of latent space visualizations for VQGAN and our GQ-VAE.

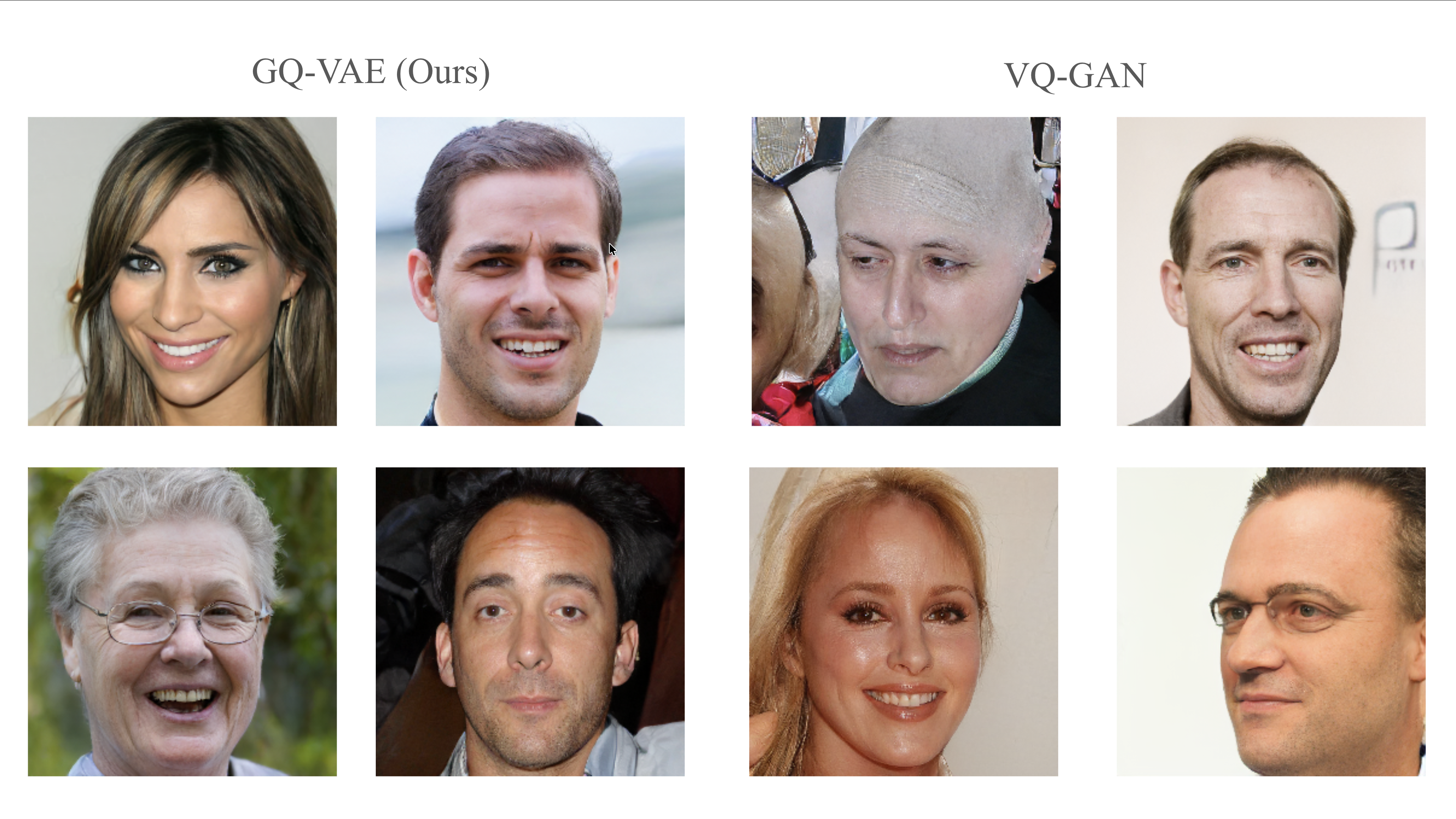

A comparison of autoregressive image generation results on the FFHQ dataset. From left to right: four samples from our GQ-VAE paired with the second-stage Llama model from IBQ, and four samples from VQGAN.

The model architecture, reconstruction comparison, and latent space visualization images are credited to Yuanhe Guo.

Continuous Diffusion with VQ-VAE for Symbolic Music Generation

2024/06 - 2024/10

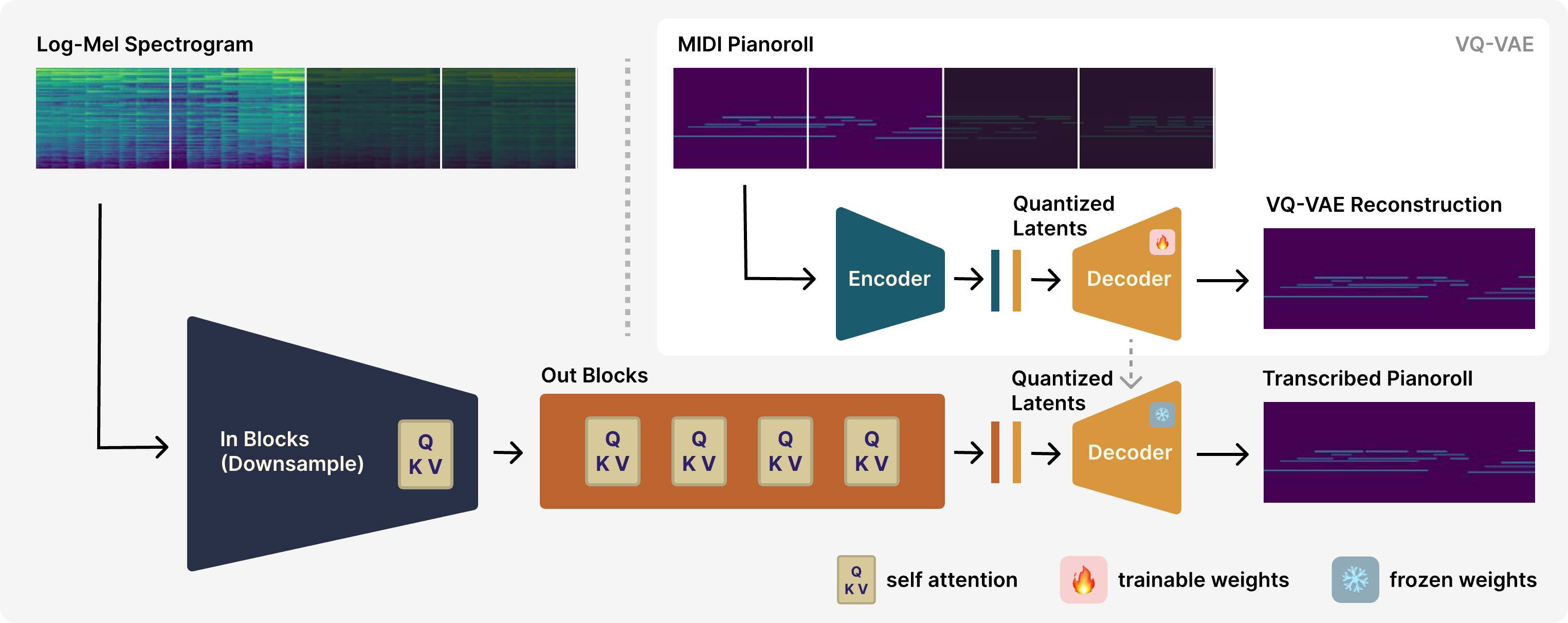

We introduce a framework that bridges the modality gap between continuous diffusion models and inherently discrete symbolic music. It has two core components: a Mel Encoder that maps log-mel spectrogram inputs to a latent representation, and a Bar Tokenizer that maps piano rolls into a continuous latent space. Because this embedding is continuous, the discrete musical data can be sampled and generated with any standard diffusion model. The method segments music at the bar level rather than by fixed time windows, which yields a more musically coherent representation.

An illustration of our proposed framework.
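To make the bar-level segmentation concrete, here is a minimal sketch that groups notes by the bar containing their onset instead of cutting at fixed time intervals. The note format `(onset_beat, duration_beats, pitch)`, the function name `split_into_bars`, and the fixed 4-beat bar are assumptions for illustration; the actual Bar Tokenizer operates on piano rolls and may handle meter and bar-crossing notes differently.

```python
from collections import defaultdict


def split_into_bars(notes, beats_per_bar=4):
    """Group (onset_beat, duration_beats, pitch) notes by the bar of their onset.

    Onsets are rewritten relative to the start of their bar. Notes that
    sustain across a bar line are kept whole in the bar where they start.
    """
    bars = defaultdict(list)
    for onset, duration, pitch in notes:
        bar_index = int(onset // beats_per_bar)
        bars[bar_index].append((onset - bar_index * beats_per_bar, duration, pitch))
    if not bars:
        return []
    # Include empty bars so that list position equals bar number.
    return [bars[i] for i in range(max(bars) + 1)]


# Hypothetical notes in 4/4 time, for illustration only.
notes = [(0.0, 1.0, 60), (2.0, 2.0, 64), (4.0, 4.0, 67), (9.5, 0.5, 72)]
bars = split_into_bars(notes)
for number, bar in enumerate(bars):
    print(number, bar)
```

Each bar then becomes one unit for the tokenizer, so segment boundaries follow the musical meter rather than wall-clock time.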

The framework illustration is credited to Yuanhe Guo.

Course Projects

Audio Classification with Residual Network

CSCI-SHU 360 Machine Learning, Spring 2024

Advised by Shengjie Wang

I built an ensemble of 10 modified ResNet18 models to classify voice types in song snippets. Using Mel spectrograms as input features, the system assigns each snippet to one of four classes: no voice, a male-like voice, a female-like voice, or multiple voices.
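One common way to combine an ensemble like this is soft voting: average each model's class probabilities and pick the highest. The sketch below assumes that scheme; the combination rule used in the project is not stated, and the logits here are made-up stand-ins for the outputs of the individual ResNet18 models.

```python
import math

CLASSES = ["no voice", "male-like voice", "female-like voice", "multiple voices"]


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def ensemble_predict(per_model_logits):
    """Soft voting: average per-model probabilities, return (label, mean_probs)."""
    probs = [softmax(logits) for logits in per_model_logits]
    mean = [sum(p[c] for p in probs) / len(probs) for c in range(len(CLASSES))]
    best = max(range(len(CLASSES)), key=lambda c: mean[c])
    return CLASSES[best], mean


# Hypothetical logits from three ensemble members for one snippet.
per_model_logits = [
    [2.0, 0.1, 0.3, 0.0],
    [0.2, 0.1, 1.5, 0.0],
    [0.1, 0.0, 2.5, 0.3],
]
label, mean_probs = ensemble_predict(per_model_logits)
print(label, [round(p, 3) for p in mean_probs])
```

Averaging probabilities rather than taking a majority vote lets a confident model outweigh several uncertain ones, which is one reason soft voting is often preferred for small ensembles.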